Write queries with exports StarterEnterpriseEnterprise +

Exports enhance saved queries by running your saved queries and writing the output to a table or view within your data platform. Saved queries are a way to save and reuse commonly used queries in MetricFlow, exports take this functionality a step further by:

- Enabling you to write these queries within your data platform using the dbt job scheduler.

- Proving an integration path for tools that don't natively support the Semantic Layer by exposing tables of metrics and dimensions.

Essentially, exports are like any other table in your data platform — they enable you to query metric definitions through any SQL interface or connect to downstream tools without a first-class Semantic Layer integration. Running an export counts towards queried metrics usage. Querying the resulting table or view from the export does not count toward queried metric usage.

Prerequisites

- You have a dbt account on a Starter or Enterprise-tier plan.

- You use one of the following data platforms: Snowflake, BigQuery, Databricks, Redshift, or Postgres.

- You are on dbt version 1.7 or newer.

- You have the Semantic Layer configured in your dbt project.

- You have a dbt environment with the job scheduler enabled.

- You have a saved query and export configured in your dbt project. In your configuration, leverage caching to cache common queries, speed up performance, and reduce compute costs.

- You have the dbt CLI installed. Note, that exports aren't supported in Studio IDE yet.

Benefits of exports

The following section explains the main benefits of using exports:

Considerations

Exports offer many benefits and it's important to note some use cases that fall outside the advantages:

- Business users may still struggle to consume from tens, hundreds, or thousands of tables, and choosing the right one can be a challenge.

- Business users may also make mistakes when aggregating and filtering from the pre-built tables.

For these use cases, use the dynamic Semantic Layer APIs instead of exports.

Run exports

Before you're able to run exports in development or production, you'll need to make sure you've configured saved queries and exports in your dbt project. In your saved query config, you can also leverage caching with the dbt job scheduler to cache common queries, speed up performance, and reduce compute costs.

There are two ways to run an export:

- Run exports in development using the dbt CLI to test the output before production (You can configure exports in the Studio IDE, however running them directly in the Studio IDE isn't supported yet).

- If you're using the Studio IDE, use

dbt buildto run exports. Make sure you have the environment variable enabled.

- If you're using the Studio IDE, use

- Run exports in production using the dbt job scheduler to write these queries within your data platform.

Exports in development

You can run an export in your development environment using your development credentials if you want to test the output of the export before production.

This section explains the different commands and options available to run exports in development.

-

Use the

dbt sl exportcommand to test and generate exports in your development environment for a singular saved query. You can also use the--selectflag to specify particular exports from a saved query. -

Use the

dbt sl export-allcommand to run exports for multiple saved queries at once. This command provides a convenient way to manage and execute exports for several queries simultaneously, saving time and effort. -

If you're using the Studio IDE, use

dbt buildto run exports. Make sure you have the environment variable enabled before running the command.

Exports for single saved query

Use the following command to run exports in the dbt CLI:

dbt sl export

The following table lists the options for dbt sl export command, using the -- flag prefix to specify the parameters:

| Loading table... |

You can also run any export defined for the saved query and write the table or view in your development environment. Refer to the following command example and output:

dbt sl export --saved-query sq_name

The output would look something like this:

Polling for export status - query_id: 2c1W6M6qGklo1LR4QqzsH7ASGFs..

Export completed.

Use the select flag

You can have multiple exports for a saved query and by default, all exports are run for a saved query. You can use the select flag in development to select specific or multiple exports. Note, you can’t sub-select metrics or dimensions from the saved query, it’s just to change the export configuration i.e table format or schema

For example, the following command runs export_1 and export_2 and doesn't work with the --alias or --export_as flags:

dbt sl export --saved-query sq_name --select export_1,export2

Overriding export configurations

The --select flag is mainly used to include or exclude specific exports. If you need to change these settings, you can use the following flags to override export configurations:

--export-as— Defines the materialization type (table or view) for the export. This creates a new export with its own settings and is useful for testing in development.--schema— Specifies the schema to use for the written table or view.--alias— Assigns a custom alias to the written table or view. This overrides the default export name.

Be careful. The --select flag can't be used with alias or schema.

For example, you can use the following command to create a new export named new_export as a table:

dbt sl export --saved-query sq_number1 --export-as table --alias new_export

Exports for multiple saved queries

Use the command, dbt sl export-all, to run exports for multiple saved queries at once. This is different from the dbt sl export command, which only runs exports for a singular saved query. For example, to run exports for multiple saved queries, you can use:

dbt sl export-all

The output would look something like this:

Exports completed:

- Created TABLE at `DBT_SL_TEST.new_customer_orders`

- Created VIEW at `DBT_SL_TEST.new_customer_orders_export_alias`

- Created TABLE at `DBT_SL_TEST.order_data_key_metrics`

- Created TABLE at `DBT_SL_TEST.weekly_revenue`

Polling completed

The command dbt sl export-all provides the flexibility to manage multiple exports in a single command.

Exports in production

Enabling and executing exports in dbt optimizes data workflows and ensures real-time data access. It enhances efficiency and governance for smarter decisions.

Exports use the default credentials of the production environment. To enable exports to run saved queries and write them within your data platform, perform the following steps:

- Set an environment variable in dbt.

- Create and execute export job run.

Set environment variable

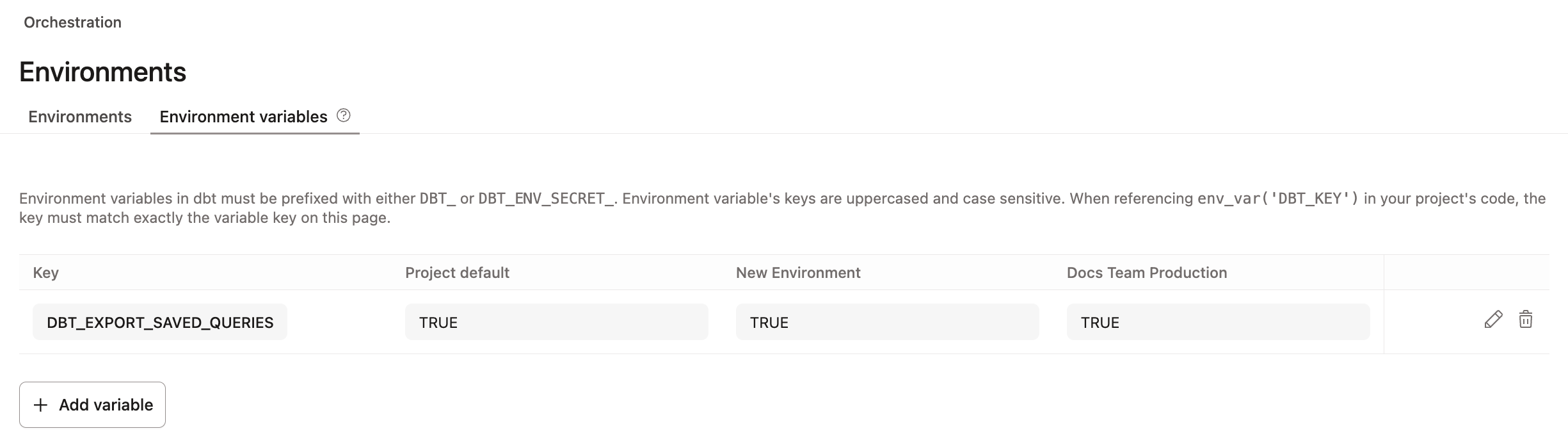

- Click Deploy in the top navigation bar and choose Environments.

- Select Environment variables.

- Set the environment variable key to and the environment variable's value to

TRUE.

Doing this ensures saved queries and exports are included in your dbt build job. For example, running dbt build -s sq_name runs the equivalent of dbt sl export --saved-query sq_name in the dbt Job scheduler.

If exports aren't needed, you can set the value(s) to FALSE ().

When you run a build job, any saved queries downstream of the dbt models in that job will also run. To make sure your export data is up-to-date, run the export as a downstream step (after the model).

Create and execute exports

-

Create a deploy job and ensure the environment variable is set, as described in Set environment variable.

- This enables you to run any export that needs to be refreshed after a model is built.

- Use the selector syntax

--selector-soption in your build command to specify a particular dbt model or saved query to run. For example, to run all saved queries downstream of theorderssemantic model, use the following command:

dbt build --select orders+ -

After dbt finishes building the models, the MetricFlow Server processes the exports, compiles the necessary SQL, and executes this SQL against your data platform. It directly executes a "create table" statement so the data stays within your data platform.

-

Review the exports' execution details in the jobs logs and confirm the export was run successfully. This helps troubleshoot and to ensure accuracy. Since saved queries are integrated into the dbt DAG, all outputs related to exports are available in the job logs.

-

Your data is now available in the data platform for querying! 🎉

FAQs

Related docs

Was this page helpful?

This site is protected by reCAPTCHA and the Google Privacy Policy and Terms of Service apply.